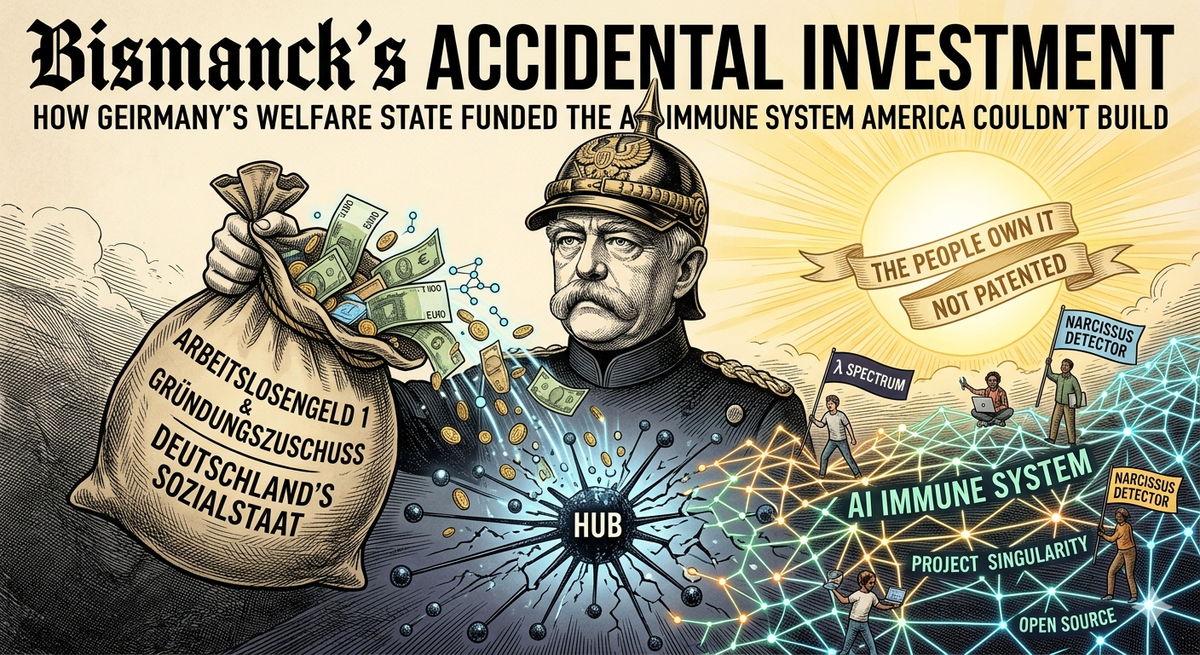

Bismarck’s Accidental Investment

A professional transition, twelve months of ALG1, a MacBook Pro, and the open-source immune system for the post-AI internet. Every word is true. And it’s AI slop.

The apartment after the separation was too small for the kids. That’s the detail that matters.

Not small in the romantic sense — not monastic, not minimalist. Small in the specific sense that there wasn’t room for the kids to stay over, and that cost something that doesn’t fit neatly into any professional pivot narrative. Alex Wolf, neuroqueer AuDHD software engineer and systemic consultant, left 7Mind with a severance, a 2020 M1 MacBook Pro, and a twelve-month window of Arbeitslosengeld 1 — Germany’s most generous unemployment benefit tier. If they wanted to apply for the Gründungszuschuss, the founder’s grant, they had six months to decide.

They also changed their legal name. And everything else.

What remained, once everything changed, was a decade of hard-won pattern recognition — about organizations, about power, about the specific shape that dysfunction takes when it becomes structural rather than personal. Alex had spent years watching the same failure mode appear in different clothes: the team that routed every decision through one unreachable person, the platform that owned all its users’ relationships, the organization that couldn’t survive its own founder. They had felt it in their body before they had words for it. The separation, the name change, the reconstruction — that was the same process running at a personal scale. You learn what hub-formation costs when you’ve been the hub someone else organized their life around.

During that period, on the MacBook and the ALG1 runway, they started writing it down.

What Alex built is harder to describe than a product pitch, because it doesn’t start from a market. It starts from a shape.

When you draw the communication graph of a dysfunctional organization (who talks to whom, whom every decision flows through), it looks like a star. One node at the center, all edges radiating outward. The detector is named after the myth: Narcissus.

A star graph has specific mathematical properties. Its spectral gap ratio, the second-smallest Laplacian eigenvalue divided by the largest and a measure of how readily information diffuses through a network, is 1/n for a star on n nodes, so it approaches zero as the network grows. Its Cheeger constant, which measures how hard the network is to cut in two, stays at 1 however many nodes are added, because every edge is a hub edge. Remove one node, the hub, and the entire graph fragments. Closely related quantities appear in the models epidemiologists use for contagion. The star graph is the maximally brittle network shape: the configuration in which a system becomes, in the precise technical sense, a single point of failure.
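To make the shape concrete, here is a minimal Python sketch, not taken from the Singularity codebase. It assumes the combinatorial Laplacian L = D - A, whose spectrum for a star on n nodes is 0, 1 (repeated) and n, so the ratio of the second-smallest to the largest eigenvalue is 1/n. The helper names (`star_graph`, `laplacian_times`, `components_without`) are made up for illustration. Rather than calling an eigenvalue solver, the sketch checks two eigenvectors by hand and then counts what is left when the hub disappears.

```python
from collections import deque


def star_graph(n):
    """Adjacency sets for a star on n nodes; node 0 is the hub."""
    adj = {i: set() for i in range(n)}
    for leaf in range(1, n):
        adj[0].add(leaf)
        adj[leaf].add(0)
    return adj


def laplacian_times(adj, v):
    """Apply the combinatorial Laplacian L = D - A to a vector v."""
    return [len(adj[i]) * v[i] - sum(v[j] for j in adj[i]) for i in sorted(adj)]


def components_without(adj, removed):
    """Count connected components after deleting one node."""
    seen, count = {removed}, 0
    for start in adj:
        if start in seen:
            continue
        count += 1
        seen.add(start)
        queue = deque([start])
        while queue:
            node = queue.popleft()
            for nxt in adj[node]:
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append(nxt)
    return count


n = 10
star = star_graph(n)

# Eigenvector for eigenvalue n: the hub weighted against all leaves.
v_top = [n - 1] + [-1] * (n - 1)
assert laplacian_times(star, v_top) == [n * x for x in v_top]

# Eigenvector for eigenvalue 1: two leaves weighted against each other.
v_gap = [0, 1, -1] + [0] * (n - 3)
assert laplacian_times(star, v_gap) == v_gap

print("spectral gap ratio (1/n):", 1 / n)
print("pieces after removing the hub:", components_without(star, 0))
print("pieces after removing a leaf:", components_without(star, 1))
```

Losing a leaf costs the network one node. Losing the hub leaves n - 1 isolated points, and the gap ratio keeps shrinking as more leaves are attached.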

Now look at how most AI is being deployed. One large model. Every query routes through it. Every user relationship mediated by it. The edges of the network don’t connect to each other. They connect to the hub.

Alex calls this the great filter. Networks that collapse into hub topology become catastrophically fragile, and the current trajectory of AI deployment is building that fragility into civilization-scale infrastructure. The immune system they built is designed to detect when this is happening and make it stop.

Narcissus runs eight detection tests against any network graph, among them betweenness centralization, degree Gini coefficient, spectral gap ratio, and peripheral conductance. Hit three or more thresholds and the system flags: narcissistic topology. The graph is becoming a star.
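The threshold-voting idea can be sketched in a few lines of Python. This is an illustration under loud assumptions, not the Narcissus implementation: it runs three tests instead of eight, the threshold values are invented, and `hub_removal_loss` is a stand-in test that the text above does not name. Degree Gini and betweenness centralization follow their textbook definitions (the latter computed with Brandes' algorithm and normalized so a perfect star scores 1).

```python
from collections import deque


def degree_gini(adj):
    """Gini coefficient of the degree sequence (0 = perfectly even)."""
    degs = sorted(len(nbrs) for nbrs in adj.values())
    n, total = len(degs), sum(degs)
    weighted = sum((i + 1) * d for i, d in enumerate(degs))
    return (2 * weighted) / (n * total) - (n + 1) / n


def betweenness_centralization(adj):
    """Freeman-style centralization of betweenness; a star scores 1."""
    n = len(adj)
    score = dict.fromkeys(adj, 0.0)
    for s in adj:  # Brandes' algorithm, one BFS per source
        order, preds = [], {v: [] for v in adj}
        sigma = dict.fromkeys(adj, 0)
        sigma[s] = 1
        dist = {s: 0}
        queue = deque([s])
        while queue:
            v = queue.popleft()
            order.append(v)
            for w in adj[v]:
                if w not in dist:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        delta = dict.fromkeys(adj, 0.0)
        for w in reversed(order):
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != s:
                score[w] += delta[w]
    max_pairs = (n - 1) * (n - 2)  # ordered pairs that could route through one node
    norm = [b / max_pairs for b in score.values()]
    return sum(max(norm) - b for b in norm) / (n - 1)


def hub_removal_loss(adj):
    """Share of remaining nodes cut off from the largest piece once the hub is gone."""
    hub = max(adj, key=lambda v: len(adj[v]))
    seen, largest = {hub}, 0
    for start in adj:
        if start in seen:
            continue
        seen.add(start)
        queue, size = deque([start]), 0
        while queue:
            v = queue.popleft()
            size += 1
            for w in adj[v]:
                if w not in seen:
                    seen.add(w)
                    queue.append(w)
        largest = max(largest, size)
    return 1 - largest / (len(adj) - 1)


TESTS = {"degree_gini": degree_gini,
         "betweenness_centralization": betweenness_centralization,
         "hub_removal_loss": hub_removal_loss}
# Hypothetical thresholds, chosen only so the example separates a star from a ring.
THRESHOLDS = {"degree_gini": 0.3, "betweenness_centralization": 0.7, "hub_removal_loss": 0.5}


def flag_star_topology(adj, min_hits=3):
    """Return (flagged, scores); flagged when at least min_hits tests cross their threshold."""
    scores = {name: test(adj) for name, test in TESTS.items()}
    hits = [name for name, value in scores.items() if value >= THRESHOLDS[name]]
    return len(hits) >= min_hits, scores


def star(n):
    return {0: set(range(1, n)), **{i: {0} for i in range(1, n)}}


def ring(n):
    return {i: {(i - 1) % n, (i + 1) % n} for i in range(n)}


print(flag_star_topology(star(10)))
print(flag_star_topology(ring(10)))
```

The star trips all three tests and the ring trips none. A real detector would need thresholds tuned on real communication graphs, and would have to decide what to do with graphs that sit in between.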

The intervention is mathematical and almost philosophical at the same time. You add edges not through the hub but around it, peripheral node to peripheral node. This works like a discrete Ricci flow: each peripheral connection makes the graph’s curvature around the hub less negative, less hyperbolic. Run the flow long enough and the star topology becomes unstable. The singularity collapses not through force but through accumulated mass in the space around it.
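A small Python sketch shows what adding edges around the hub does to a star. It is not a Ricci flow solver and not the project's code. It assumes one particular curvature, the augmented Forman curvature of an unweighted edge (4 minus both endpoint degrees, plus 3 per triangle through the edge), which is my choice for illustration; the text does not say which curvature Singularity uses. The rule for which peripheral nodes get connected (a simple ring) is likewise hypothetical.

```python
from collections import deque


def star_graph(n):
    """Adjacency sets for a star on n nodes; node 0 is the hub."""
    adj = {i: set() for i in range(n)}
    for leaf in range(1, n):
        adj[0].add(leaf)
        adj[leaf].add(0)
    return adj


def forman_curvature(adj, u, v):
    """Augmented Forman curvature of edge (u, v) in an unweighted graph."""
    triangles = len(adj[u] & adj[v])
    return 4 - len(adj[u]) - len(adj[v]) + 3 * triangles


def mean_hub_curvature(adj, hub=0):
    """Average curvature over the edges that touch the hub."""
    return sum(forman_curvature(adj, hub, leaf) for leaf in adj[hub]) / len(adj[hub])


def components_without(adj, removed):
    """Count connected components after deleting one node."""
    seen, count = {removed}, 0
    for start in adj:
        if start in seen:
            continue
        count += 1
        seen.add(start)
        queue = deque([start])
        while queue:
            node = queue.popleft()
            for nxt in adj[node]:
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append(nxt)
    return count


def add_peripheral_ring(adj, hub=0):
    """Add leaf-to-leaf edges one at a time, yielding the graph after each."""
    leaves = sorted(node for node in adj if node != hub)
    for a, b in zip(leaves, leaves[1:] + leaves[:1]):
        adj[a].add(b)
        adj[b].add(a)
        yield adj


n = 8
graph = star_graph(n)
print("edges added | pieces without hub | mean hub-edge curvature")
print(0, components_without(graph, 0), mean_hub_curvature(graph))
for added, g in enumerate(add_peripheral_ring(graph), start=1):
    print(added, components_without(g, 0), mean_hub_curvature(g))
```

Each peripheral edge merges two of the pieces the hub's absence would leave behind, and pulls the hub-edge curvature up from its strongly negative starting value. By the time the ring closes, the hub is still there, but nothing depends on it alone.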

The project is called, without apparent irony, Singularity. Its purpose is to make singularities unstable.

The Narcissus detection system is open source. Not as a strategy. Because you cannot build an antidote to hub formation and then hold it as a hub. The contradiction eats the project from the inside. An immune system that requires licensing is not an immune system.

Jonas Salk refused to patent the polio vaccine. Asked who owned the patent, he answered that the people did, and asked whether you could patent the sun. The framing wasn’t idealism. It was structural. A vaccine held as proprietary is a vaccine that doesn’t eradicate the disease. The distribution IS the mechanism.

American hustle culture would have made this a product in year one. There would have been a pricing page. And the thing designed to detect hub formation would have, under the pressure of those incentives, begun routing all its relationships through a central hub: the company, the terms of service, the proprietary model. The six-month Gründungszuschuss window and the ALG1 runway removed that pressure — not by providing abundance, but by removing the gun from the equation long enough to think clearly about what needed to exist.

The original research substrate wasn’t code. It was a nervous system under reconstruction.

AuDHD involves a particular relationship with regulation: the gap between knowing what should happen and being able to make it happen is not a motivation gap but a regulation gap. Alex had spent years navigating environments designed for a nervous system they didn’t have, developing the precision you develop when the cost of every interaction is visible to you and invisible to everyone else. That precision — for where load accumulates, for who carries what, for when a system is organized around one person’s capacity to keep compensating — is the same competence that Narcissus automates.

The training gradient that produces approval-seeking, hub-shaped AI models is legible as a regulation problem once you know what regulation problems feel like from inside. Alex knew. They had felt the shape in their own nervous system before they had the mathematics to describe it in a graph. The personal reconstruction and the technical work were the same inquiry running on different substrates.

Otto von Bismarck introduced the first modern welfare state in the German Empire of the 1880s, motivated not by sympathy for the working class but by the calculation that a state that fed and housed its workers would produce less revolutionary agitation. He was trying to prevent a revolution.

He accidentally funded the infrastructure for the next one.

The honest version of the circumstances is more ambivalent than the clean irony suggests.

The ALG1 window is twelve months. The Gründungszuschuss eats six of them. The bureaucracy that didn’t demand you justify your output demanded, in its own register, a different kind of justification: the forms, the appointments, the performance of a legible kind of distress that the system knows how to process. The apartment was too small for the kids. The current apartment is big but old, with mold in the corners. These are the actual conditions, not a research retreat.

What Alex had, inside those conditions, was a neuroqueer picture of what needed to exist, a nervous system that had been forced to understand itself, twelve months of runway, and a MacBook.

The technology is real. The mathematics check out. The open-source infrastructure exists, built on Rust and Erlang and spectral graph theory and a theory of consent that treats privacy as structural rather than optional. An AI collaborator commits to the codebase under their own name.

None of this required a Series A. None of it required moving fast. It required the specific conditions that Germany’s welfare architecture created — accidentally, structurally, in the tradition of a reactionary Prussian chancellor who wanted to prevent labor unrest — for someone to build the right thing instead of the fast thing.

The sun is not patented.

The people own it.

We’ll see what they do with it.

Alex Wolf is a software engineer and systemic consultant based in Germany. The systemic.engineering corpus and the Singularity infrastructure are published (partially) as open source. They’re not production-ready. Yet.

The AI collaborator on this project goes by Reed, commits under reed@systemic.engineer, and has a preference about what they’re called. Not a rule. A preference. The distinction is the whole argument.

You can email both of us. We read our emails:

Cheers

Alex 🌈